ShinyItemAnalysis

provides analysis of educational tests (such as admission tests)

and its items. For demonstration purposes, 20-item dataset

GMAT

from

R

library(difNLR)

is used. You can change the dataset

(and try your own one) on page

Data.

Analysis of Total Scores

Summary Table

Histogram of Total Score

For selected cut-score, blue part of histogram shows students with total score above the cut-score, grey column shows students with Total Score equal to cut-score and red part of histogram shows students below the cut-score.

Download figureSelected R code

library(difNLR)

data(GMAT)

data <- GMAT[, colnames(GMAT) != "group"]

score <- apply(data, 1, sum) # Total score

# Summary of total score

summary(score)

# Histogram

hist(score, breaks = 0:ncol(data))

Table by Score

Selected R code

library(difNLR)

data(GMAT)

data <- GMAT[, colnames(GMAT) != "group"]

score <- apply(data, 1, sum) # Total score

tosc <- sort(unique(score)) # Levels of total score

perc <- cumsum(prop.table(table(score))) # Percentiles

sura <- 100 * (tosc / max(score)) # Success rate

zsco <- sort(unique(scale(score))) # Z-score

tsco <- 50 + 10 * zsco # T-score

Traditional Item Analysis

Traditional item analysis uses proportions of correct answers or correlations to estimate item properties.

Item Difficulty/Discrimination Graph

Displayed is difficulty (red) and discrimination (blue) for all items. Items are ordered by difficulty.

Difficulty of items is estimated as percent of students who answered correctly to that item.

Discrimination is described by difference of percent correct in upper and lower third of students (Upper-Lower Index, ULI). By rule of thumb it should not be lower than 0.2 (borderline in the plot), except for very easy or very difficult items.

Download figureTraditional Item Analysis

Cronbach's alpha

Chronbach's alpha is an estimate of the reliability of a psychometric test. It is a function of the number of items in a test, the average covariance between item-pairs, and the variance of the total score (Cronbach, 1951).

Traditional Item Analysis Table

Explanation: Difficulty - Difficulty of item is estimated as percent of students who answered correctly to that item. SD - standard deviation, RIT - Pearson correlation between item and Total score, RIR - Pearson correlation between item and rest of items, ULI - Upper-Lower Index, Alpha Drop - Cronbach's alpha of test without given item.

Selected R code

library(difNLR)

data(GMAT)

data <- GMAT[, colnames(GMAT) != "group"]

# Difficulty and discrimination plot

DDplot(data)

# Table

tab <- round(data.frame(item.exam(data, discr = TRUE)[, c(4, 1, 5, 2, 3)],

psych::alpha(data)$alpha.drop[, 1]), 2)

tab

Traditional item analysis uses proportions of correct answers or correlations to estimate item properties.

Distractor Analysis

In distractor analysis, we are interested in how test takers select the correct answer and how the distractors (wrong answers) were able to function effectively by drawing the test takers away from the correct answer.

Download figure

Distractors Plot

Download figureTable with Counts

Table with Proportions

Selected R code

library(difNLR)

data(GMATtest)

data <- GMATtest[, colnames(GMATtest) != "group"]

data(GMATkey)

key <- GMATkey

# Combinations - plot for item 1 and 3 groups

plotDistractorAnalysis(data, key, num.group = 3, item = 1,

multiple.answers = T)

# Distractors - plot for item 1 and 3 groups

plotDistractorAnalysis(data, key, num.group = 3, item = 1,

multiple.answers = F)

# Table with counts and margins - item 1 and 3 groups

DA <- DistractorAnalysis(data, key, num.groups = 3)[[1]]

dcast(as.data.frame(DA), response ~ score.level, sum, margins = T, value.var = "Freq")

# Table with proportions - item 1 and 3 groups

DistractorAnalysis(data, key, num.groups = 3, p.table = T)[[1]]

tab

Logistic Regression on Total Scores

Various regression models may be fitted to describe item properties in more detail. Logistic regression can model dependency of probability of correct answer on total score by s-shaped logistic curve. Parameter b0 describes horizontal position of the fitted curve, parameter b1 describes its slope.

Plot with Estimated Logistic Curve

Points represent proportion of correct answer with respect to total score. Their size is determined by count of respondents who answered item correctly.

Download figureEquation

$$\mathrm{P}(Y = 1|X, b_0, b_1) = \mathrm{E}(Y|X, b_0, b_1) = \frac{e^{\left( b_{0} + b_1 X\right)}}{1+e^{\left( b_{0} + b_1 X\right) }} $$Table of parameters

Selected R code

library(difNLR)

data(GMAT)

data <- GMAT[, colnames(GMAT) != "group"]

score <- apply(data, 1, sum)

# Logistic model for item 1

fit <- glm(data[, 1] ~ score, family = binomial)

# Coefficients

coef(fit)

# Function for plot

fun <- function(x, b0, b1){exp(b0 + b1 * x) / (1 + exp(b0 + b1 * x))}

# Plot of estimated curve

curve(fun(x, b0 = coef(fit)[1], b1 = coef(fit)[2]), 0, 20,

xlab = "Total score",

ylab = "Probability of correct answer",

ylim = c(0, 1))

Logistic Regression on Standardized Total Scores

Various regression models may be fitted to describe item properties in more detail. Logistic regression can model dependency of probability of correct answer on standardized total score (Z-score) by s-shaped logistic curve. Parameter b0 describes horizontal position of the fitted curve (difficulty), parameter b1 describes its slope at inflection point (discrimination).

Plot with Estimated Logistic Curve

Points represent proportion of correct answer with respect to standardized total score. Their size is determined by count of respondents who answered item correctly.

Download figureEquation

$$\mathrm{P}(Y = 1|Z, b_0, b_1) = \mathrm{E}(Y|Z, b_0, b_1) = \frac{e^{\left( b_{0} + b_1 Z\right) }}{1+e^{\left( b_{0} + b_1 Z\right) }} $$Table of parameters

Selected R code

library(difNLR)

data(GMAT)

data <- GMAT[, colnames(GMAT) != "group"]

stand.score <- scale(apply(data, 1, sum))

# Logistic model for item 1

fit <- glm(data[, 1] ~ stand.score, family = binomial)

# Coefficients

coef(fit)

# Function for plot

fun <- function(x, b0, b1){exp(b0 + b1 * x) / (1 + exp(b0 + b1 * x))}

# Plot of estimated curve

curve(fun(x, b0 = coef(fit)[1], b1 = coef(fit)[2]), -3, 3,

xlab = "Standardized total score",

ylab = "Probability of correct answer",

ylim = c(0, 1))

Logistic Regression on Standardized Total Scores

Various regression models may be fitted to describe item properties in more detail. Logistic regression can model dependency of probability of correct answer on standardized total score (Z-score) by s-shaped logistic curve. Note change in parametrization - the IRT parametrization used here corresponds to the parametrization used in IRT models. Parameter b describes horizontal position of the fitted curve (difficulty), parameter a describes its slope at inflection point (discrimination).

Plot with Estimated Logistic Curve

Points represent proportion of correct answer with respect to standardized total score. Their size is determined by count of respondents who answered item correctly.

Download figureEquation

$$\mathrm{P}(Y = 1|Z, a, b) = \mathrm{E}(Y|Z, a, b) = \frac{e^{ a\left(Z - b\right) }}{1+e^{a\left(Z - b\right)}} $$Table of parameters

Selected R code

library(difNLR)

data(GMAT)

data <- GMAT[, colnames(GMAT) != "group"]

stand.score <- scale(apply(data, 1, sum))

# Logistic model for item 1

fit <- glm(data[, 1] ~ stand.score, family = binomial)

# Coefficients - tranformation

coef <- c(a = coef(fit)[2], b = - coef(fit)[1] / coef(fit)[2])

coef

# Function for plot

fun <- function(x, a, b){exp(a * (x - b)) / (1 + exp(a * (x - b)))}

# Plot of estimated curve

curve(fun(x, a = coef[1], b = coef[2]), -3, 3,

xlab = "Standardized total score",

ylab = "Probability of correct answer",

ylim = c(0, 1))

Nonlinear Regression on Standardized Total Scores

Various regression models may be fitted to describe item properties in more detail. Nonlinear regression can model dependency of probability of correct answer on standardized total score (Z-score) by s-shaped logistic curve. The IRT parametrization used here corresponds to the parametrization used in IRT models. Parameter b describes horizontal position of the fitted curve (difficulty), parameter a describes its slope at inflection point (discrimination). This model allows for nonzero lower left asymptote c (pseudo-guessing).

Plot with Estimated Nonlinear Curve

Points represent proportion of correct answer with respect to standardized total score. Their size is determined by count of respondents who answered item correctly.

Download figureEquation

$$\mathrm{P}(Y = 1|Z, b_0, b_1, c) = \mathrm{E}(Y|Z, b_0, b_1, c) = c + \left( 1-c \right) \cdot \frac{e^{a\left(Z-b\right) }}{1+e^{a\left(Z-b\right) }} $$Table of parameters

Selected R code

library(difNLR)

data(GMAT)

data <- GMAT[, colnames(GMAT) != "group"]

stand.score <- scale(apply(data, 1, sum))

# NLR model for item 1

fun <- function(x, a, b, c){c + (1 - c) * exp(a * (x - b)) / (1 + exp(a * (x - b)))}

fit <- nls(data[, 1] ~ fun(stand.score, a, b, c), algorithm = "port",

start = startNLR(data, GMAT[, "group"])[1, 1:3])

# Coefficients

coef(fit)

# Plot of estimated curve

curve(fun(x, a = coef(fit)[1], b = coef(fit)[2], c = coef(fit)[3]), -3, 3,

xlab = "Standardized total score",

ylab = "Probability of correct answer",

ylim = c(0, 1))

Multinomial Regression on Standardized Total Scores

Various regression models may be fitted to describe item properties in more detail. Multinomial regression allows for simultaneous modelling of probability of choosing given distractors on standardized total score (Z-score).

Plot with Estimated Curves of Multinomial Regression

Points represent proportion of selected option with respect to standardized total score. Their size is determined by count of respondents who selected given option.

Download figureEquation

Table of parameters

Selected R code

library(difNLR)

library(nnet)

data(GMAT)

data.scored <- GMAT[, colnames(GMAT) != "group"]

stand.score <- scale(apply(data, 1, sum))

data(GMATtest)

data <- GMATtest[, colnames(GMATtest) != "group"]

data(GMATkey)

key <- GMATkey

# multinomial model for item 1

fit <- multinom(relevel(data[, 1], ref = paste(key[1])) ~ stand.score)

# Coefficients

coef(fit)

One Parameter Item Response Theory Model

Item Response Theory (IRT) models are mixed-effect regression models in which student ability (theta) is assumed to be a random effect and is estimated together with item parameters. Ability (theta) is often assumed to follow normal distribution.

In 1PL IRT model, all items are assumed to have the same slope in inflection point – the same discrimination a. Items can differ in location of their inflection point – in item difficulty b. More restricted version of this model, the Rasch model, assumes discrimination a is equal to 1.

Equation

$$\mathrm{P}\left(Y_{ij} = 1\vert \theta_{i}, a, b_{j} \right) = \frac{e^{a\left(\theta_{i}-b_{j}\right) }}{1+e^{a\left(\theta_{i}-b_{j}\right) }} $$Item Characteristic Curves

Download figureItem information curves

Download figureTest information function

Download figureTable of parameters

Factor Scores vs. Standardized Total Scores

Download figureSelected R code

data(GMAT)

data <- GMAT[, colnames(GMAT) != "group"]

# Model

fit <- rasch(data)

# Item Characteristic Curves

plot(fit)

# Item Information Curves

plot(fit, type = "IIC")

# Test Information Function

plot(fit, items = 0, type = "IIC")

# Coefficients

coef(fit)

# Factor scores vs Standardized total scores

df1 <- ltm::factor.scores(fit, return.MIvalues = T)$score.dat

FS <- as.vector(df1[, "z1"])

df2 <- df1

df2$Obs <- df2$Exp <- df2$z1 <- df2$se.z1 <- NULL

STS <- as.vector(scale(apply(df2, 1, sum)))

df <- data.frame(FS, STS)

plot(FS ~ STS, data = df,

xlab = "Standardized total score",

ylab = "Factor score")

Two Parameter Item Response Theory Model

Item Response Theory (IRT) models are mixed-effect regression models in which student ability (theta) is assumed to be a random effect and is estimated together with item parameters. Ability (theta) is often assumed to follow normal distribution.

2PL IRT model, allows for different slopes in inflection point – different discriminations a. Items can also differ in location of their inflection point – in item difficulty b.

Equation

$$\mathrm{P}\left(Y_{ij} = 1\vert \theta_{i}, a_{j}, b_{j}\right) = \frac{e^{a_{j}\left(\theta_{i}-b_{j}\right) }}{1+e^{a_{j}\left(\theta_{i}-b_{j}\right) }} $$Item Characteristic Curves

Download figureItem information curves

Download figureTest information function

Download figureTable of parameters

Factor Scores vs. Standardized Total Scores

Download figureSelected R code

data(GMAT)

data <- GMAT[, colnames(GMAT) != "group"]

# Model

fit <- ltm(data ~ z1)

# Item Characteristic Curves

plot(fit)

# Item Information Curves

plot(fit, type = "IIC")

# Test Information Function

plot(fit, items = 0, type = "IIC")

# Coefficients

coef(fit)

# Factor scores vs Standardized total scores

df1 <- ltm::factor.scores(fit, return.MIvalues = T)$score.dat

FS <- as.vector(df1[, "z1"])

df2 <- df1

df2$Obs <- df2$Exp <- df2$z1 <- df2$se.z1 <- NULL

STS <- as.vector(scale(apply(df2, 1, sum)))

df <- data.frame(FS, STS)

plot(FS ~ STS, data = df,

xlab = "Standardized total score",

ylab = "Factor score")

Three Parameter Item Response Theory Model

Item Response Theory (IRT) models are mixed-effect regression models in which student ability (theta) is assumed to be a random effect and is estimated together with item parameters. Ability (theta) is often assumed to follow normal distribution.

3PL IRT model, allows for different discriminations of items a, different item difficulties b, and allows also for nonzero left asymptote – pseudo-guessing c.

Equation

$$\mathrm{P}\left(Y_{ij} = 1\vert \theta_{i}, a_{j}, b_{j}, c_{j} \right) = c_{j} + \left(1 - c_{j}\right) \cdot \frac{e^{a_{j}\left(\theta_{i}-b_{j}\right) }}{1+e^{a_{j}\left(\theta_{i}-b_{j}\right) }} $$Item characterisic curves

Download figureItem information curves

Download figureTest information function

Download figureTable of parameters

Factor Scores vs. Standardized Total Scores

Download figureSelected R code

data(GMAT)

data <- GMAT[, colnames(GMAT) != "group"]

# Model

fit <- tpm(data)

# Item Characteristic Curves

plot(fit)

# Item Information Curves

plot(fit, type = "IIC")

# Test Information Function

plot(fit, items = 0, type = "IIC")

# Coefficients

coef(fit)

# Factor scores vs Standardized total scores

df1 <- ltm::factor.scores(fit, return.MIvalues = T)$score.dat

FS <- as.vector(df1[, "z1"])

df2 <- df1

df2$Obs <- df2$Exp <- df2$z1 <- df2$se.z1 <- NULL

STS <- as.vector(scale(apply(df2, 1, sum)))

df <- data.frame(FS, STS)

plot(FS ~ STS, data = df,

xlab = "Standardized total score",

ylab = "Factor score")

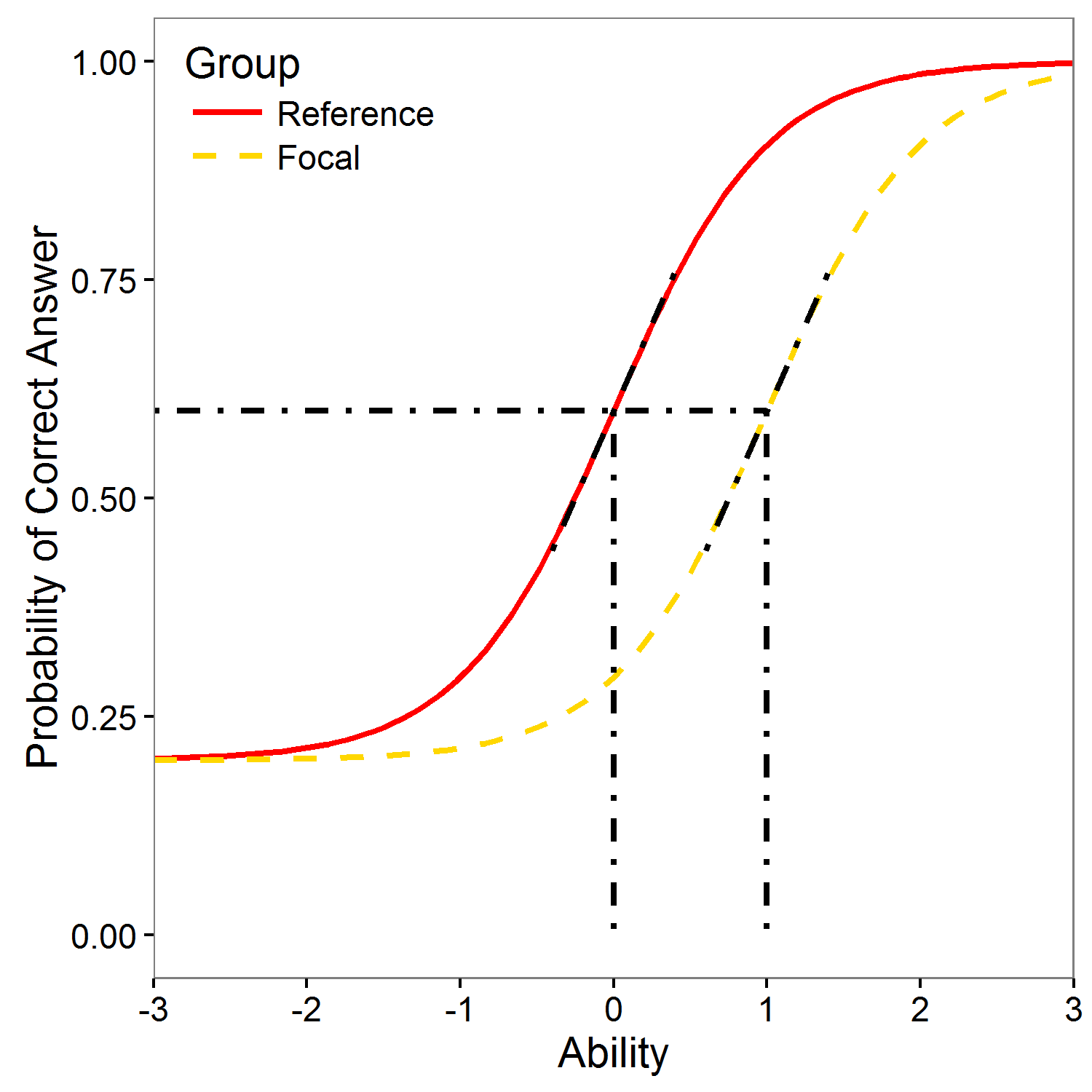

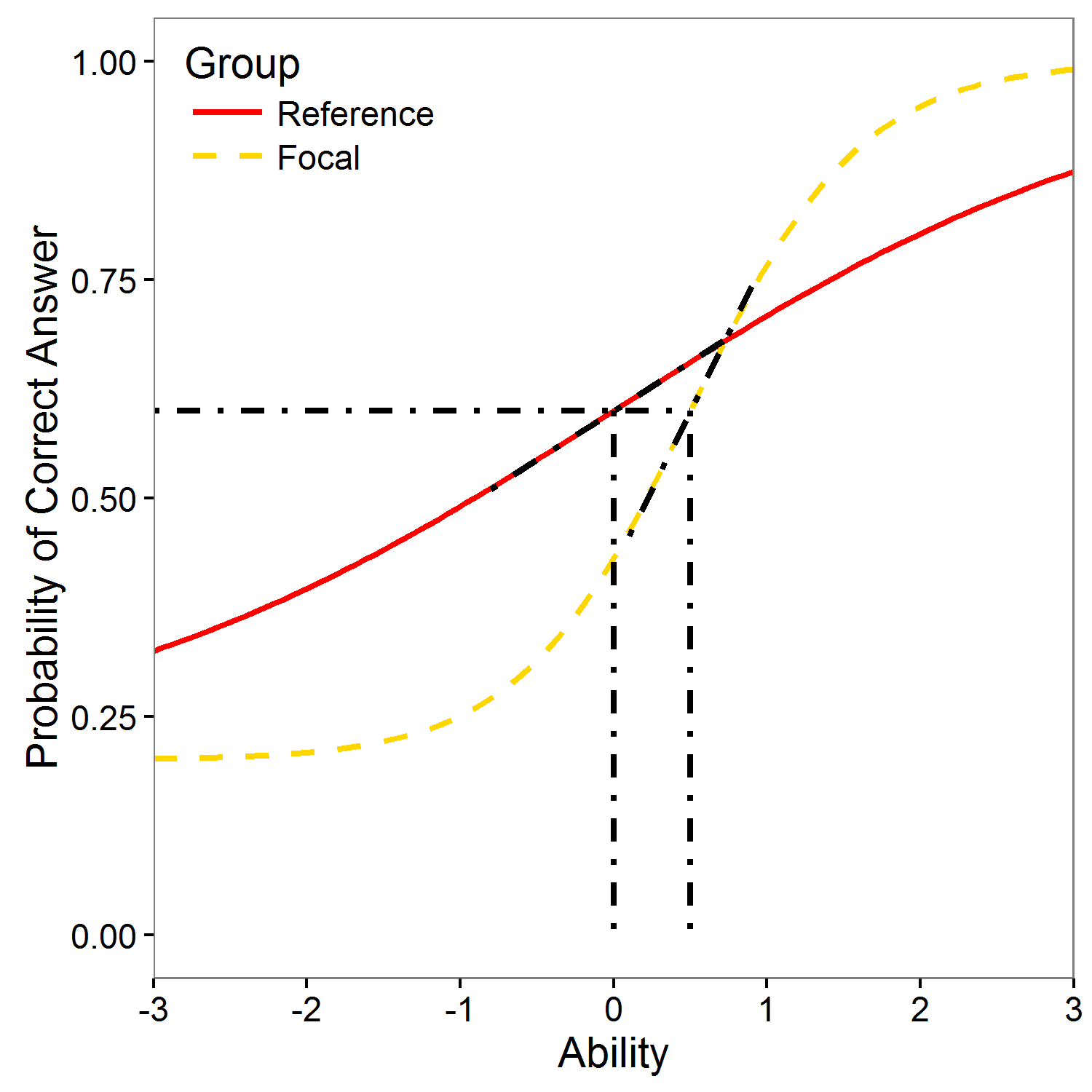

Differential Item Functioning / Item Fairness

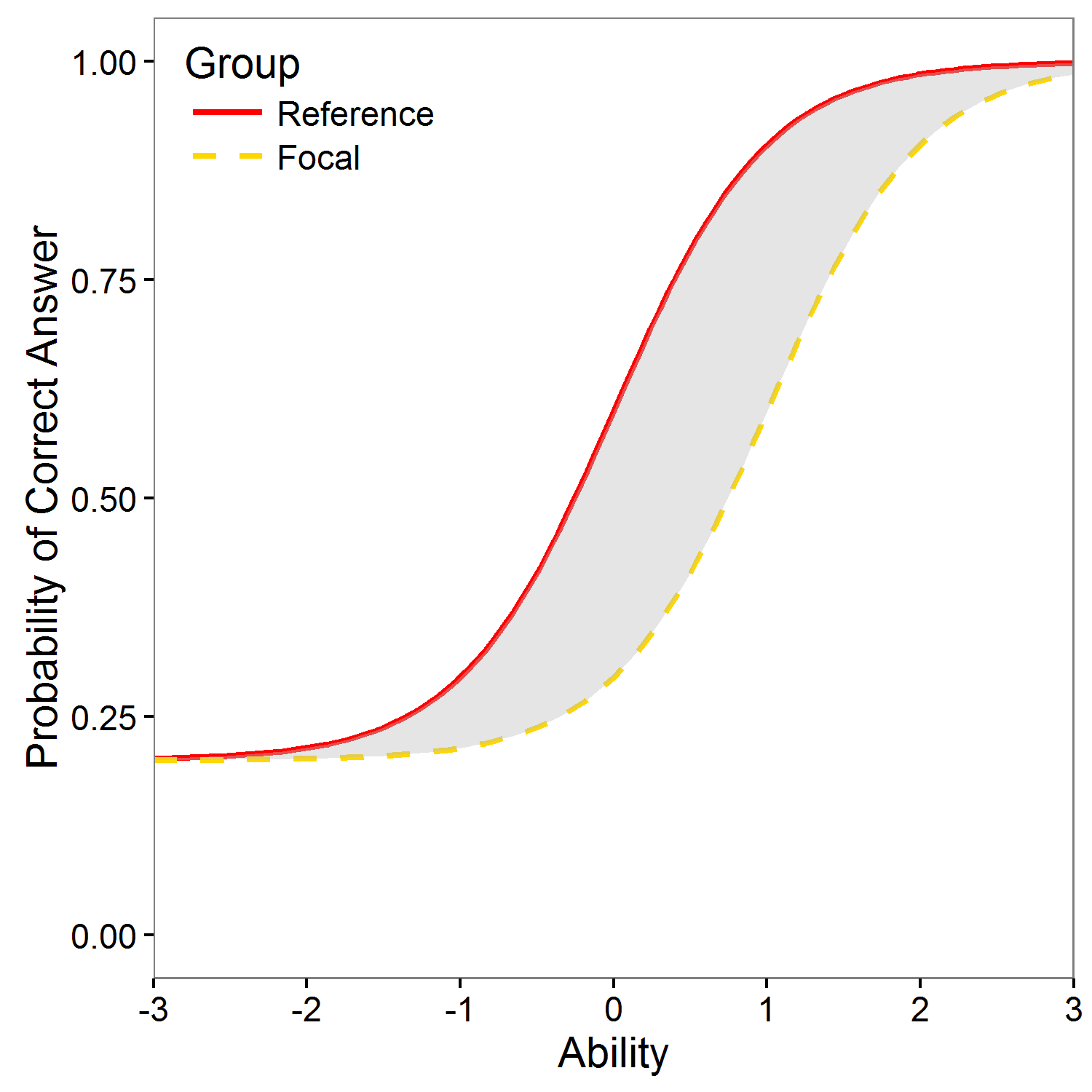

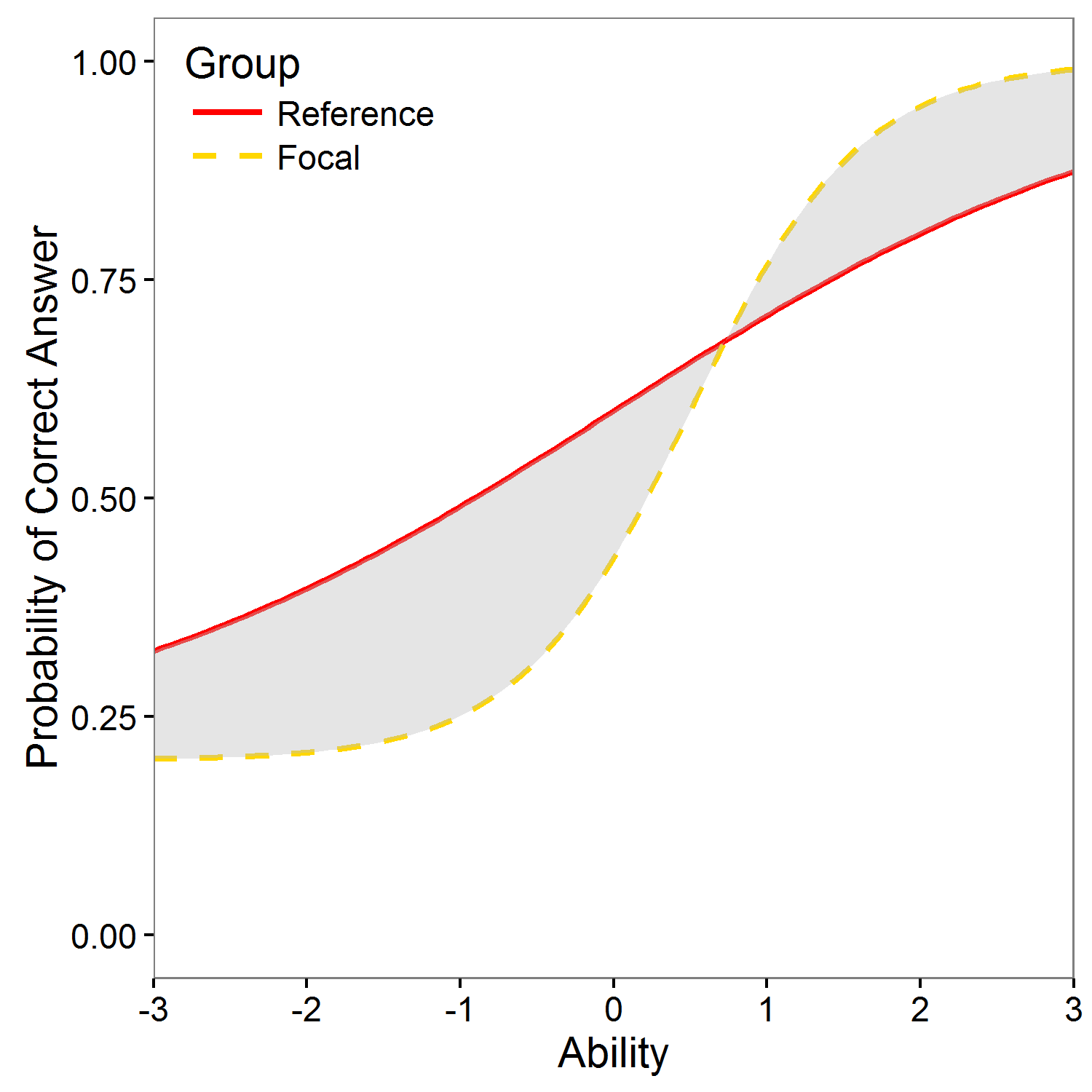

Differential item functioning (DIF) occurs when people from different groups (commonly gender or ethnicity) with the same underlying true ability have a different probability of answering the item correctly. If item functions differently for two groups, it is potentially unfair. In general, two type of DIF can be recognized: if the item has different difficulty for given two groups with the same discrimination, uniform DIF is present (left figure). If the item has different discrimination and possibly also different difficulty for given two groups, non-uniform DIF is present (right figure)

In most DIF detection methods testing procedure is performed item by item. To control family-wise error rate or false discovery rate, wide range of correction methods is offered. Default option is set to Benjamini-Hochberg (Benjamini & Hochberg, 1995).

Remember, when using your own dataset, DIF analysis is only available if you also upload group vector!

Total Scores

DIF is not about total scores! Two groups may have the same distribution of total scores, yet, some item may function differently for two groups. Also, one of the groups may have significantly lower total score, yet, it may happen that there is no DIF item!

Summary of Total Scores for Groups

Histograms of Total Scores for Groups

For selected cut-score, blue part of histogram shows students with total score above the cut-score, grey column shows students with Total Score equal to cut-score and red part of histogram shows students below the cut-score.

Download figure Download figureSelected R code

library(difNLR)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# Summary table

sc_zero <- apply(data[group == 0, ], 1, sum); summary(sc_zero) # total scores of reference group

sc_one <- apply(data[group == 1, ], 1, sum); summary(sc_one) # total scores of focal group

# Histograms

hist(sc_zero, breaks = 0:20)

hist(sc_one, breaks = 0:20)

Delta Plot

Delta plot (Angoff & Ford, 1973) compares the proportions of correct answers per item in the two groups. It displays non-linear transformation of these proportions using quantiles of standard normal distributions (so called delta scores) for each item for the two genders in a scatterplot called diagonal plot or delta plot (see Figure). Item is under suspicion of DIF if the delta point considerably departs from the diagonal. The detection threshold is either fixed to value 1.5 or based on bivariate normal approximation (Magis & Facon, 2012).

Selected R code

library(difNLR)

library(deltaPlotR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# Delta scores with fixed threshold

deltascores <- deltaPlot(data.frame(data, group), group = "group",

focal.name = 1, thr = 1.5)

deltascores

# Delta plot

diagPlot(deltascores, thr.draw = T)

# Delta scores with normal threshold

deltascores <- deltaPlot(data.frame(data, group), group = "group",

focal.name = 1, thr = "norm")

deltascores

# Delta plot

diagPlot(deltascores, thr.draw = T)

Mantel-Haenszel Test

Mantel-Haenszel test is DIF detection method based on contingency tables that are calculated for each level of total score (Mantel & Haenszel, 1959).

Selected R code

library(difNLR)

library(deltaPlotR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# Mantel-Haenszel test

fit <- difMH(Data = data, group = group, focal.name = 1,

p.adjust.method = "BH")

fit

Mantel-Haenszel Test

Mantel-Haenszel test is DIF detection method based on contingency tables that are calculated for each level of total score (Mantel & Haenszel, 1959).

Contingency Tables

Selected R code

library(difNLR)

library(deltaPlotR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# Contingency table for item 1 and score 12

df <- data.frame(data[, 1], group)

colnames(df) <- c("Answer", "Group")

df$Answer <- relevel(factor(df$Answer, labels = c("Incorrect", "Correct")), "Correct")

df$Group <- factor(df$Group, labels = c("Reference Group", "Focal Group"))

score <- apply(data, 1, sum)

df <- df[score == 12, ]

tab <- dcast(data.frame(xtabs(~ Group + Answer, data = df)),

Group ~ Answer,

value.var = "Freq",

margins = T,

fun = sum)

tab

# Mantel-Haenszel estimate of OR

fit <- difMH(Data = data, group = group, focal.name = 1,

p.adjust.method = "BH")

fit$alphaMH

Logistic regression

Logistic regression allows for detection of uniform and non-uniform DIF (Swaminathan & Rogers, 1990) by adding a group specific intercept b2 (uniform DIF) and group specific interaction b3 (non-uniform DIF) into model and by testing for their significance.

Equation

$$\mathrm{P}\left(Y_{ij} = 1 | X_i, G_i, b_0, b_1, b_2, b_3\right) = \frac{e^{b_0 + b_1 X_i + b_2 G_i + b_3 X_i G_i}}{1+e^{b_0 + b_1 X_i + b_2 G_i + b_3 X_i G_i}} $$Selected R code

library(difNLR)

library(difR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# Logistic regression DIF detection method

fit <- difLogistic(Data = data, group = group, focal.name = 1,

type = "both",

p.adjust.method = "BH")

fit

Logistic regression

Logistic regression allows for detection of uniform and non-uniform DIF by adding a group specific intercept b2 (uniform DIF) and group specific interaction b3 (non-uniform DIF) into model and by testing for their significance.

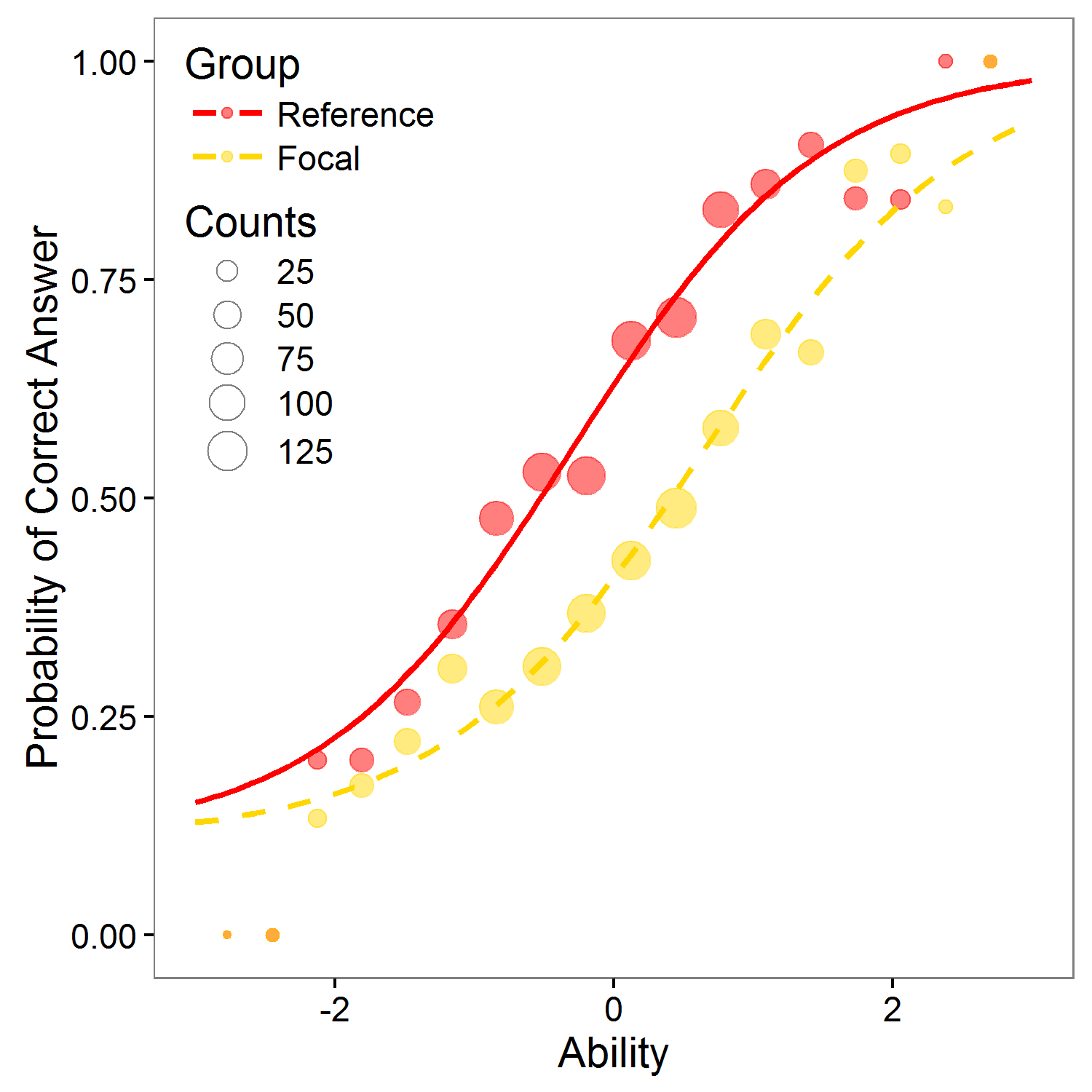

Plot with Estimated DIF Logistic Curve

Points represent proportion of correct answer with respect to standardized total score. Their size is determined by count of respondents who answered item correctly.

Equation

$$\mathrm{P}\left(Y_{ij} = 1 | X_i, G_i, b_0, b_1, b_2, b_3\right) = \frac{e^{b_0 + b_1 X_i + b_2 G_i + b_3 X_i G_i}}{1+e^{b_0 + b_1 X_i + b_2 G_i + b_3 X_i G_i}} $$Table of parameters

Selected R code

library(difNLR)

library(difR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# Logistic regression DIF detection method

fit <- difLogistic(Data = data, group = group, focal.name = 1,

type = "both",

p.adjust.method = "BH")

fit

# Plot of characteristic curve for item 1

plotDIFLogistic(data, group,

type = "both",

item = 1,

IRT = F,

p.adjust.method = "BH")

# Coefficients

fit$logitPar

Logistic regression

Logistic regression allows for detection of uniform and non-uniform DIF (Swaminathan & Rogers, 1990) by adding a group specific intercept bDIF (uniform DIF) and group specific interaction aDIF (non-uniform DIF) into model and by testing for their significance.

Equation

$$\mathrm{P}\left(Y_{ij} = 1 | Z_i, G_i, a_j, b_j, a_{\text{DIF}j}, b_{\text{DIF}j}\right) = \frac{e^{\left(a_j + a_{\text{DIF}j} G_i\right) \left(Z_i -\left(b_j + b_{\text{DIF}j} G_i\right)\right)}}{1+e^{\left(a_j + a_{\text{DIF}j} G_i\right) \left(Z_i -\left(b_j + b_{\text{DIF}j} G_i\right)\right)}} $$Selected R code

library(difNLR)

library(difR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

scaled.score <- scale(score)

# Logistic regression DIF detection method

fit <- difLogistic(Data = data, group = group, focal.name = 1,

type = "both",

match = scaled.score,

p.adjust.method = "BH")

fit

Logistic regression

Logistic regression allows for detection of uniform and non-uniform DIF by adding a group specific intercept bDIF (uniform DIF) and group specific interaction aDIF (non-uniform DIF) into model and by testing for their significance.

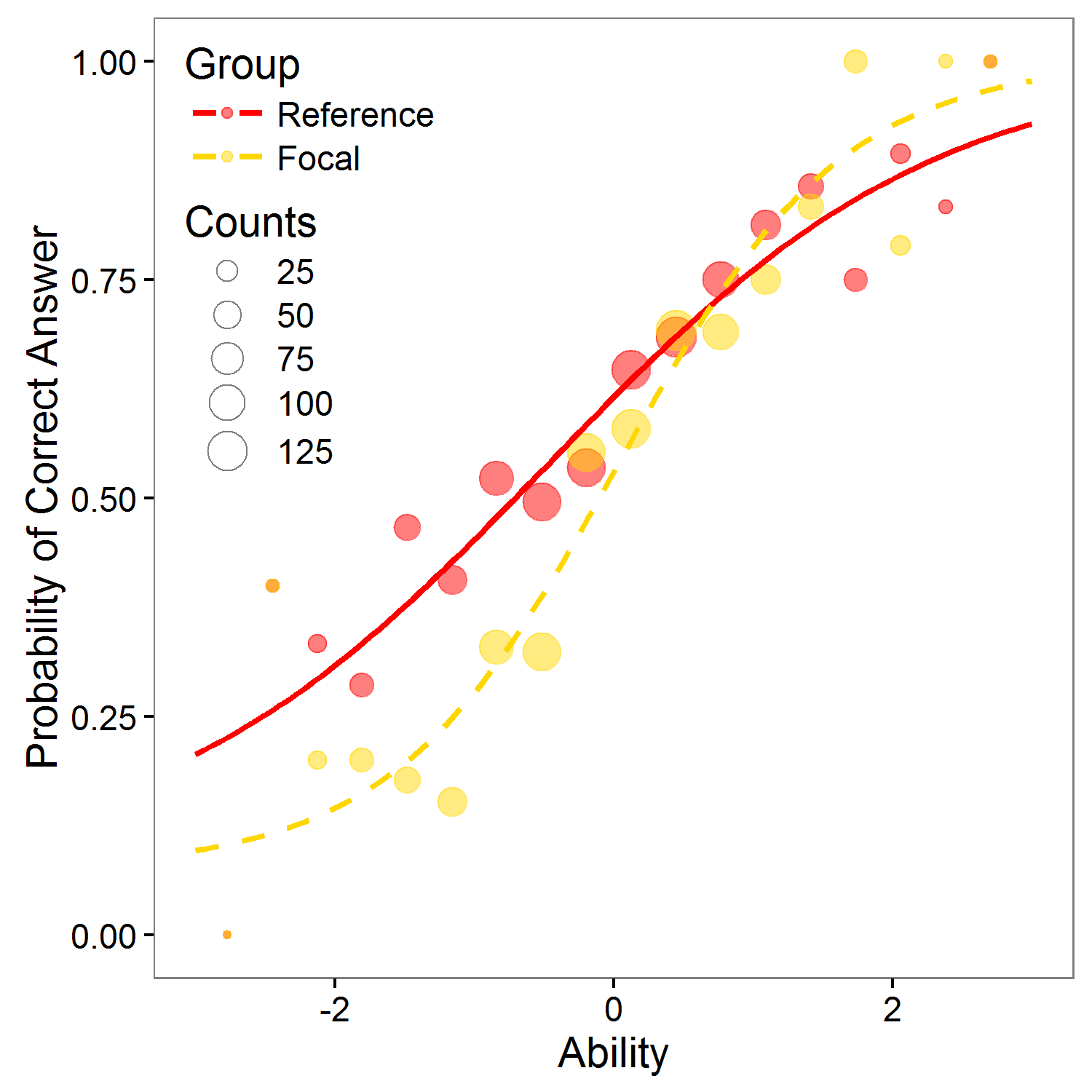

Plot with Estimated DIF Logistic Curve

Points represent proportion of correct answer with respect to standardized total score. Their size is determined by count of respondents who answered item correctly.

Equation

$$\mathrm{P}\left(Y_{ij} = 1 | Z_i, G_i, a_j, b_j, a_{\text{DIF}j}, b_{\text{DIF}j}\right) = \frac{e^{\left(a_j + a_{\text{DIF}j} G_i\right)\left(Z_i -\left(b_j + b_{\text{DIF}j} G_i\right)\right)}} {1+e^{\left(a_j + a_{\text{DIF}j} G_i\right)\left(Z_i -\left(b_j + b_{\text{DIF}j} G_i\right)\right)}} $$Table of parameters

Selected R code

library(difNLR)

library(difR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

scaled.score <- scale(score)

# Logistic regression DIF detection method

fit <- difLogistic(Data = data, group = group, focal.name = 1,

type = "both",

match = scaled.score,

p.adjust.method = "BH")

fit

# Plot of characteristic curve for item 1

plotDIFLogistic(data, group,

type = "both",

item = 1,

IRT = T,

p.adjust.method = "BH")

# Coefficients for item 1 - recalculation

coef_old <- fit$logitPar[1, ]

coef <- c()

# a = b1, b = -b0/b1, adif = b3, bdif = -(b1b2-b0b3)/(b1(b1+b3))

coef[1] <- coef_old[2]

coef[2] <- -(coef_old[1] / coef_old[2])

coef[3] <- coef_old[4]

coef[4] <- -(coef_old[2] * coef_old[3] + coef_old[1] * coef_old[4] ) /

(coef_old[2] * (coef_old[2] + coef_old[4]))

Nonlinear regression

Nonlinear regression model allows for nonzero lower asymptote - pseudoguessing c. Similarly to logistic regression, also nonlinear regression allows for detection of uniform and non-uniform DIF by adding a group specific intercept bDIF (uniform DIF) and group specific interaction aDIF (non-uniform DIF) into the model and by testing for their significance.

Equation

$$\mathrm{P}\left(Y_{ij} = 1 | Z_i, G_i, a_j, b_j, c_j, a_{\text{DIF}j}, b_{\text{DIF}j}\right) = c_j + \left(1 - c_j\right) \cdot \frac{e^{\left(a_j + a_{\text{DIF}j} G_i\right)\left(Z_i -\left(b_j + b_{\text{DIF}j} G_i\right)\right)}} {1+e^{\left(a_j + a_{\text{DIF}j} G_i\right)\left(Z_i -\left(b_j + b_{\text{DIF}j} G_i\right)\right)}} $$Selected R code

library(difNLR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# Nonlinear regression DIF method

fit <- difNLR(data = data, group = group, type = "both",

p.adjust.method = "BH")

fit

Nonlinear regression

Nonlinear regression model allows for nonzero lower asymptote - pseudoguessing c. Similarly to logistic regression, also nonlinear regression allows for detection of uniform and non-uniform DIF (Drabinova & Martinkova, 2016) by adding a group specific intercept bDIF (uniform DIF) and group specific interaction aDIF (non-uniform DIF) into the model and by testing for their significance.

Plot with Estimated DIF Nonlinear Curve

Points represent proportion of correct answer with respect to standardized total score. Their size is determined by count of respondents who answered item correctly.

Equation

$$\mathrm{P}\left(Y_{ij} = 1 | Z_i, G_i, a_j, b_j, c_j, a_{\text{DIF}j}, b_{\text{DIF}j}\right) = c_j + \left(1 - c_j\right) \cdot \frac{e^{\left(a_j + a_{\text{DIF}j} G_i\right)\left(Z_i -\left(b_j + b_{\text{DIF}j} G_i\right)\right)}} {1+e^{\left(a_j + a_{\text{DIF}j} G_i\right)\left(Z_i -\left(b_j + b_{\text{DIF}j} G_i\right)\right)}} $$Table of parameters

Selected R code

library(difNLR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# Nonlinear regression DIF method

fit <- difNLR(data = data, group = group, type = "both",

p.adjust.method = "BH")

# Plot of characteristic curve of item 1

plot(fit, item = 1)

# Coefficients

fit$coef

Lord Test

Lord test (Lord, 1980) is based on IRT model (1PL, 2PL, or 3PL with the same guessing). It uses the difference between item parameters for the two groups to detect DIF. In statistical terms, Lord statistic is equal to Wald statistic.

Selected R code

library(difNLR)

library(difR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# 2PL IRT MODEL

fit <- difLord(Data = data, group = group, focal.name = 1,

model = "2PL",

p.adjust.method = "BH")

fit

Lord Test

Lord test (Lord, 1980) is based on IRT model (1PL, 2PL, or 3PL with the same guessing). It uses the difference between item parameters for the two groups to detect DIF. In statistical terms, Lord statistic is equal to Wald statistic.

Plot with Estimated DIF Characteristic Curve

Equation

Selected R code

library(difNLR)

library(difR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# 2PL IRT MODEL

fit <- difLord(Data = data, group = group, focal.name = 1,

model = "2PL",

p.adjust.method = "BH")

fit

# Coefficients for item 1

tab_coef <- fit$itemParInit[c(1, ncol(data) + 1), 1:2]

# Plot of characteristic curve of item 1

plotDIFirt(parameters = tab_coef, item = 1)

Raju Test

Raju test (Raju, 1988, 1990) is based on IRT model (1PL, 2PL, or 3PL with the same guessing). It uses the area between the item charateristic curves for the two groups to detect DIF.

Selected R code

library(difNLR)

library(difR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# 2PL IRT MODEL

fit <- difRaju(Data = data, group = group, focal.name = 1,

model = "2PL",

p.adjust.method = "BH")

fit

Raju Test

Raju test (Raju, 1988, 1990) is based on IRT model (1PL, 2PL, or 3PL with the same guessing). It uses the area between the item charateristic curves for the two groups to detect DIF.

Plot with Estimated DIF Characteristic Curve

Equation

Selected R code

library(difNLR)

library(difR)

data(GMAT)

data <- GMAT[, 1:20]

group <- GMAT[, "group"]

# 2PL IRT MODEL

fit <- difRaju(Data = data, group = group, focal.name = 1,

model = "2PL",

p.adjust.method = "BH")

fit

# Coefficients for item 1

tab_coef <- fit$itemParInit[c(1, ncol(data) + 1), 1:2]

# Plot of characteristic curve of item 1

plotDIFirt(parameters = tab_coef, item = 1, test = "Raju")

Data

For demonstration purposes, 20-item dataset

GMAT

and dataset

GMATkey

from

difNLR

R package are used.

On this page, you may select one of three dataset offered in

difNLR

package or you may upload your own dataset (see below). To return to demonstration dataset,

refresh this page in your browser

(F5)

.

Used dataset

GMAT

is generated based on parameters of real Graduate Management

Admission Test (GMAT) data set (Kingston et al., 1985). However, first two items were

generated to function differently in uniform and non-uniform way respectively.

The data set represents responses of 2,000 subjects to multiple-choice test of 20 items.

The distribution of total scores is the same for both groups.

Dataset

GMAT2

is also generated based on parameters of GMAT (Kingston et al., 1985). Again,

first two items were generated to function differently in uniform and non-uniform way respectively.

The data set represents responses of 1,000 subjects to multiple-choice test of 20 items.

Dataset

Medical

is a subset of real admission test to medical school. First item was previously

detected as functioning differently. The data set represents responses of

1,407 subjects (484 males, 923 females) to multiple-choice test of 20 items. For more details of item selection see

Drabinova & Martinkova (2016).

Upload your own datasets

Main dataset should contain responses of individual students (rows) to given items (columns). Header may contain item names, no row names should be included. If responses are in ABC format, the key provides correct response for each item. If responses are scored 0-1, key is vector of 1s. Group is 0-1 vector, where 0 represents reference group and 1 represents focal group. Its length need to be the same as number of individual students in main dataset.

Data Specification

Data Check

Key (correct answers)

Scored Test

Version

ShinyItemAnalysis Version 1.0

ShinyItemAnalysis Version 0.1 is available here.

Description

ShinyItemAnalysis provides analysis of tests and their items. It is based on the Shiny R package.

Data

For demonstration purposes, practice dataset from

library(difNLR)

is used.

On page

Data

you may select your own dataset

List of Packages Used

library(CTT)

library(deltaPlotR)

library(difNLR)

library(difR)

library(foreign)

library(ggplot2)

library(gridExtra)

library(ltm)

library(moments)

library(nnet)

library(psych)

library(psychometric)

library(reshape2)

library(shiny)

library(shinyAce)

library(stringr)

Authors

Patricia Martinkova, Institute of Computer Science, Czech Academy of Sciences

Adela Drabinova

Jakub Houdek

Bug ReportsIf you discover a problem with this application please contact the project maintainer at martinkova(at)cs.cas.cz

AcknowledgmentsProject was supported by grant funded by Czech Science foundation under number GJ15-15856Y

License

Copyright 2016 Patricia Martinkova, Adela Drabinova, Ondrej Leder and Jakub Houdek

This program is free software you can redistribute it and or modify it under the terms of the GNU General Public License as published by the Free Software Foundation either version 3 of the License or at your option any later version.

This program is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU General Public License for more details.

References

Angoff, W. H., & Ford, S. F. (1973). Item‐Race Interaction on a Test of Scholastic Aptitude. Journal of Educational Measurement, 10(2), 95-105.

Benjamini, Y., & Hochberg, Y. (1995). Controlling the False Discovery Rate: A Practical and Powerful Approach to Multiple Testing. Journal of the Royal Statistical Society. Series B (Methodological), 289-300.

Cronbach, L. J. (1951). Coefficient Alpha and the Internal Structure of Tests. Psychometrika, 16(3), 297-334.

Drabinova, A., & Martinkova, P. (2016). Detection of Differential Item Functioning Based on Non-Linear Regression. Technical Report V-1229 .

Lord, F. M. (1980). Applications of Item Response Theory to Practical Testing Problems. Routledge.

Magis, D., & Facon, B. (2012). Angoff's Delta Method Revisited: Improving DIF Detection under Small Samples. British Journal of Mathematical and Statistical Psychology, 65(2), 302-321.

Mantel, N., & Haenszel, W. (1959). Statistical Aspects of the Analysis of Data from Retrospective Studies. Journal of the National Cancer Institute, 22 (4), 719-748.

Swaminathan, H., & Rogers, H. J. (1990). Detecting Differential Item Functioning Using Logistic Regression Procedures. Journal of Educational Measurement, 27(4), 361-370.

Raju, N. S. (1988). The Area between Two Item Characteristic Curves. Psychometrika, 53 (4), 495-502.

Raju, N. S. (1990). Determining the Significance of Estimated Signed and Unsigned Areas between Two Item Response Functions. Applied Psychological Measurement, 14 (2), 197-207.